How ChatGPT Will Influence the Cybersecurity Industry

The world of cybersecurity is constantly evolving, and with the introduction of ChatGPT the field appears poised to make a significant leap forward. ChatGPT is a cutting-edge AI-driven chatbot that can be applied to improve user experience and security protocols online. This blog post discusses the future of online security and how ChatGPT may change the way we interact with cybersecurity.

Technology has advanced dramatically as the world quickly transitions to the digital era. Artificial intelligence (AI) is one of the most important of these advancements and has influenced almost all facets of society. But these developments have also created new difficulties, particularly in the field of cybersecurity. In this article, we will examine how ChatGPT and the emergence of AI will affect and transform the cybersecurity industry, both now and in the future.

The cybersecurity sector is rapidly changing because of the rise of AI. Traditional security techniques are no longer sufficient as cyberattacks become more sophisticated and frequent. AI is being used by cybercriminals to carry out more complex and powerful attacks that can easily get past traditional security barriers. Therefore, it is crucial for cybersecurity professionals to use AI to improve their defense systems.

ChatGPT’s Applicability for the Cybersecurity Space

ChatGPT is a language model trained by OpenAI, with a variety of capabilities that can be helpful in the information security or cybersecurity sector. Among these capabilities are:

- It uses natural language processing to produce human-like responses. This can be helpful in a range of cybersecurity applications, including chatbots for customer support or security analysis.

- Its neural network architecture enables machine learning, allowing it to learn from and adjust to new data. Because it can learn to recognize patterns and anomalies in huge data sets, this capability can be especially helpful in detecting and responding to security threats.

- It can analyze large amounts of data quickly and accurately. This can be helpful in cybersecurity applications like network traffic analysis and intrusion detection.

- It can predict events based on past data and present trends. This capability can be especially helpful when predicting potential security threats and creating preventative strategies.

- It can be taught to perform vulnerability scanning, helping cyber professionals examine systems, networks, and applications for flaws. This makes it easier to spot potential security risks and offer solutions.

- It can be configured to automate incident response procedures such as categorizing alerts, compiling data, and escalating problems to human analysts.

- Because it can process millions of data points within seconds, it can be taught to perform threat intelligence and analysis: gathering, sifting through, and interpreting data on threats from a range of sources. This can help both in identifying new threats and in creating mitigation plans.
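
To make the incident-response capability in the list above more concrete, here is a minimal Python sketch of alert triage: categorize each alert, compile a little context, and escalate the serious ones to a human analyst. Everything in it is an assumption made for illustration. The `Alert` and `TriageResult` types, the severity labels, and `keyword_classifier` are hypothetical, and `keyword_classifier` is only a simple stand-in for the place where a language model would be called. The sketch does not use the ChatGPT API.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical severity labels; a real deployment would define its own.
SEVERITIES = ("low", "medium", "high")


@dataclass
class Alert:
    source: str
    message: str


@dataclass
class TriageResult:
    alert: Alert
    severity: str
    escalate: bool
    context: dict = field(default_factory=dict)


def keyword_classifier(message: str) -> str:
    """Stand-in for a language-model call: maps alert text to a severity."""
    text = message.lower()
    if "ransomware" in text or "exfiltration" in text:
        return "high"
    if "failed login" in text:
        return "medium"
    return "low"


def triage(alerts, classify: Callable[[str], str] = keyword_classifier):
    """Categorize alerts, attach basic context, and flag those needing a human."""
    results = []
    for alert in alerts:
        severity = classify(alert.message)
        if severity not in SEVERITIES:
            # Unexpected classifier output is treated as needing human review.
            severity = "high"
        context = {"source": alert.source, "length": len(alert.message)}
        results.append(TriageResult(alert, severity, severity == "high", context))
    return results
```

Passing the classifier in as a parameter keeps the human-escalation rule separate from whatever model produces the label, so the model can be swapped without touching the escalation logic.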

The capabilities of ChatGPT can be used to enhance the performance and effectiveness of a variety of cybersecurity applications. Due to its capacity for learning, adapting, and analyzing vast amounts of data, it is a potent tool in the fight against cyber threats in the age of artificial intelligence.

Benefits of Using ChatGPT in Cybersecurity

One way AI will affect the cybersecurity sector is by improving threat detection. Cybersecurity experts already use a variety of tools to find and track potential threats. AI-powered systems, however, can offer more precise and immediate detection, which is crucial for risk mitigation. AI can process massive amounts of data and spot patterns that the human eye might miss. As an AI-powered tool, ChatGPT can help cybersecurity experts spot potential threats and respond to them quickly by analyzing large volumes of data and finding patterns in it.

AI-driven systems can also improve how cybersecurity experts react to threats. It’s essential to act quickly after a threat has been identified to limit the harm. Cybersecurity experts can use AI to analyze threats, assess their seriousness, and choose the best course of action. With ChatGPT, cybersecurity professionals can more effectively understand and respond to threats by utilizing the power of natural language processing.

AI can also be used to build sophisticated security systems that adapt to counter new threats. Traditional security measures follow a predetermined set of rules, but cybercriminals are constantly developing new methods that can easily get around those rules. AI-powered systems can adapt to new cybercriminal tactics by learning from past attacks. By constantly learning and updating their systems, cybersecurity professionals can stay one step ahead of attackers.
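
The contrast between a fixed rule and a system that learns from what it has seen can be illustrated with a small Python sketch. This is a deliberately simple statistical baseline (a rolling mean and standard deviation), not a description of how ChatGPT or any particular product works. The names `fixed_rule` and `AdaptiveDetector`, the window size, and the thresholds are all assumptions chosen for the example.

```python
from collections import deque
from statistics import mean, pstdev


def fixed_rule(value: float, limit: float = 1000) -> bool:
    """Traditional static rule: flag only values above a hard-coded limit."""
    return value > limit


class AdaptiveDetector:
    """Flags values that deviate strongly from a rolling baseline."""

    def __init__(self, window: int = 20, threshold: float = 3.0):
        self.history = deque(maxlen=window)
        self.threshold = threshold

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= 5:  # wait for a few samples before judging
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
            else:
                anomalous = value != mu
        if not anomalous:
            # Learn only from values judged normal, so a spike
            # does not shift the baseline toward the attack.
            self.history.append(value)
        return anomalous
```

With a baseline of around 100 requests per minute, a jump to 400 stays under the static limit of 1000 and passes `fixed_rule`, while `AdaptiveDetector` flags it because it is far outside what was recently observed.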

The role of cybersecurity professionals is changing because of AI development. There will be a demand for cybersecurity experts who are knowledgeable in AI technologies as AI is used more frequently. Using AI-powered tools, cybersecurity experts will need to be able to recognize threats and take appropriate countermeasures. In addition, they will need to be able to create and maintain sophisticated security systems that employ AI to detect and address threats.

Risks of Using ChatGPT in Cybersecurity

The rise of AI in cybersecurity has its benefits, but like any other technological advancement it also carries potential risks. One of the main worries is that cybercriminals will use AI to launch more effective and sophisticated attacks. Cybercriminals can use AI to find flaws in security systems, create more powerful malware, and automate attacks. As a result, attacks will be more challenging to stop or mitigate.

Lately, the cybersecurity industry has been shaken by news of bad actors using ChatGPT to generate malware. But the question on every cyber professional’s mind is: how good is this AI-generated malware?

As things stand, ChatGPT is pretty good at providing code snippets and recommendations, as well as debugging and optimizing code. But when it comes to malware, one thing matters above all: code obfuscation. Its ability to perform code obfuscation will be the turning point for how good the malware ChatGPT generates can be. So far, however, nothing has been discovered about how capable ChatGPT is at advanced features such as code obfuscation.

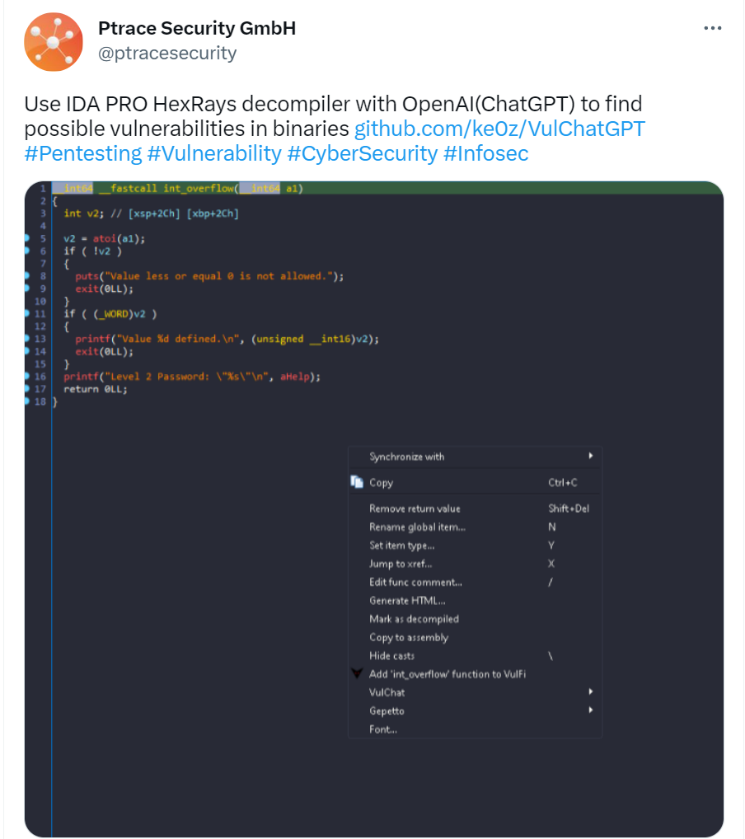

We’ve heard the news about its power when combined with IDA Pro, reverse engineering the meaning of disassembled code. This suggests that the language model is not too far away from learning advanced code writing.

To address these potential risks, cybersecurity professionals must create security systems designed to detect and mitigate attacks launched using AI. Furthermore, it is critical to guarantee that decision-making processes in AI-powered systems are auditable and transparent. This will help ensure that AI-powered systems make objective judgments and are not being abused.
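
One possible way to make automated decisions auditable, sketched here under assumptions rather than as a standard design, is to record each decision in a hash-chained log so that later tampering can be detected. The helper names `append_record` and `verify` and the record fields are hypothetical; only Python's standard `hashlib` and `json` modules are used.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record in the chain


def _digest(prev_hash: str, decision: dict) -> str:
    """Hash the previous record's hash together with this decision."""
    payload = json.dumps(decision, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log: list, decision: dict) -> dict:
    """Append a decision to the log, linking it to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {
        "decision": decision,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, decision),
    }
    log.append(record)
    return record


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["decision"]):
            return False
        prev_hash = record["hash"]
    return True
```

A log like this records what the system decided and in what order. It does not explain why a model reached a decision, so it addresses auditability more than transparency.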

ChatGPT Cybersecurity Challenges

Although ChatGPT and its API have several features that can be helpful in the cybersecurity or information security sector, implementing these technologies comes with some difficulties. These difficulties include:

- Data Quality: Ensuring that the training data used to create the model is of high quality is one of the main difficulties in implementing ChatGPT. An incorrect or inappropriate response may result from biased, insufficient, or inaccurate data.

- Security: It’s crucial to make sure that the data being used is kept secure because ChatGPT is made to process and analyze large amounts of data. In addition to making sure the system is built to prevent unauthorized access, this also includes protecting data while it is in transit and at rest.

- Ethical Issues: As an AI technology, ChatGPT raises ethical questions about transparency, bias, and privacy. There might be issues with, for instance, how data is gathered and used to train the model or how the model’s outputs are interpreted and used.

- Technical Expertise: Implementing ChatGPT and its API requires a high level of technical expertise, including proficiency in natural language processing, machine learning, and data analysis. For businesses without internal expertise or the funds to hire outside experts, this can be a challenge.

- Integration with Existing Systems: Integrating ChatGPT and its API with already-existing cybersecurity or information security systems can be difficult, especially if those systems are out-of-date or not made to work with AI technologies.

- Scalability: ChatGPT’s capacity for handling and analyzing sizable amounts of data can be both a strength and a drawback. While the technology can be highly scalable, ensuring that it can handle large volumes of data without degrading performance can be a challenge.
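
As an illustration of the security point in the list above, one common precaution is to mask sensitive values in log lines before they leave the organization, for example before they are sent to an external AI service. The Python sketch below is a minimal example under stated assumptions: the `redact` function and the two patterns are hypothetical, and they cover only IPv4 addresses and simple email addresses. Real data would need a much broader set of rules.

```python
import re

# Simplified patterns for illustration; they are not exhaustive validators.
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def redact(line: str) -> str:
    """Replace email addresses and IPv4 addresses with placeholder tokens."""
    line = EMAIL.sub("[EMAIL]", line)
    line = IPV4.sub("[IP]", line)
    return line


print(redact("Failed login for alice@example.com from 10.0.0.5"))
# prints: Failed login for [EMAIL] from [IP]
```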

Overall, implementing ChatGPT and its API in the cybersecurity or information security industry can be difficult, but these difficulties can be overcome with the right knowledge and resources. By addressing issues with data quality, security, ethics, technical expertise, integration, and scalability, organizations can use the ChatGPT API to boost the efficacy and efficiency of their cybersecurity operations.

Conclusion

To sum up, the emergence of AI is drastically altering the cybersecurity landscape, and it is crucial to create security systems that are built to recognize and counteract attacks carried out by AI.

As a language model with sophisticated natural language processing abilities, ChatGPT has the potential to significantly affect the cybersecurity industry in several ways. Its use in cybersecurity has several advantages, including its capacity to analyze massive amounts of data quickly and accurately, recognize and rank potential security threats, and produce automated responses to potential attacks.

There are, however, risks and difficulties in using ChatGPT for cybersecurity as well. One of the main risks is the possibility of hackers exploiting holes in the system, especially if the model is not properly secured. The use of AI in cybersecurity also raises ethical questions, particularly around transparency and privacy.

Despite these obstacles, ChatGPT will continue to be a significant player in the cybersecurity sector. As technology develops and improves, we’ll probably see even more sophisticated AI models used to identify and stop cyber threats. Even though it has limitations, particularly when it comes to more complex threats, ChatGPT will remain a useful tool for cybersecurity professionals for the foreseeable future.